As of yesterday, I’ve got a new laptop (woop woop I7-7700HQ)! Obviously that meant transferring data over though a bit of WiFi and an overnight transfer deemed me ready to rock this morning. I do however have an exam in a week which I should probably start revising for so this will be my last post for a bit…

One major step of stereo vision is image rectification. Image rectification is a process where two images are linearly transformed so points within one line of pixels of one image corresponds to the pixels in the same line of the other image – shifted a bit due to where the image was taken. This allows you to search for the pixels from the left hand image in the right right hand image and only have to search along one dimensions, reducing the search time required and allowing higher throughput than otherwise. There is a lot of math involved in image rectification but it eventually boils down to a 3×3 transformation matrix known as the fundamental matrix for each camera. This matrix transforms an input image into a rectified output image and if both images are transformed, the two output images will be rectified.

A 3×3 transformation matrix is a great mathematical way of describing how pixels of a pre-transformed image map to pixels of a post-transformed image. When the transformations consist of rotations, scalings, translations and shears, they are known as affine transformations.

When using Matlab to calibrate two images, a fundamental matrix is generated for each camera and as long as the spacing between cameras and other specific parameters don’t change, these matrices will be suitable for all images. It is this transformation that will need to be applied to an image stream to generate the output rectified image.

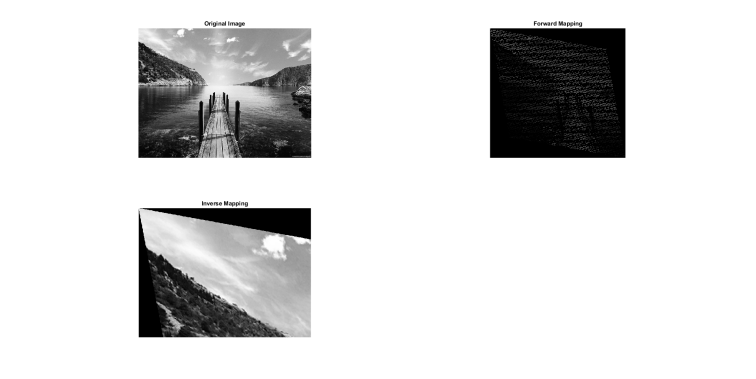

Forward mapping

The simplest way of generating a rectified image from a standard rectangular (e.g. 640×480) image would be to go through each source pixel and see where in the destination image it should occur. This is really straight forward and consists of multiplying the homogenized x,y source co-ordinates with the transformation matrix and moving this source pixel to its destination. This however has issues with scaled transformations as you end up with lots of blank space for where pixels from the source didn’t map to. This kind of transformation would require interpolation to fill in the blank spaces, further increasing processing time.

Forward mapped transformation from Matlab using a scale of 3x (along with some shear and rotation).

Forward mapped transformation from Matlab using a scale of 3x (along with some shear and rotation).

As can be seen in the image above, the forward mapped image is full of blank space and doesn’t make up a good image. This isn’t an issue with scales <1.

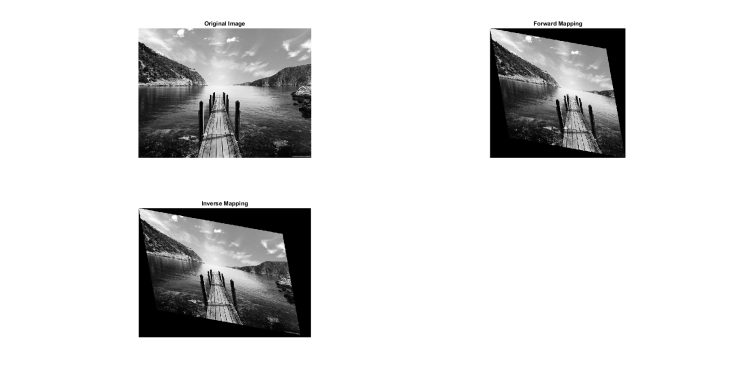

Transformation for a scale of 0.5 (with shear and rotation). Note the forward mapped image is completely filled in the useful region.

Transformation for a scale of 0.5 (with shear and rotation). Note the forward mapped image is completely filled in the useful region.

There is also the issue of how large the output image will be. One could iteratively find out the maximum X and Y outputs or go through some mathematical proof but this is reasonably tedious.

Inverse Mapping

The better solution to this issue is to use what is known as inverse mapping. Instead of working from the source to the destination, you instead work backwards. From the destination image, for each pixel, find its source co-ordinate. The inverse mapping transformation matrix is literally the forward transformation matrix, inverted. 3×3 matrix inversion is relatively straightforward (to the point where I’ve had to do it in exam settings!) so as long as an inverse exists, calculating it isn’t a particularly hard task.

The next step consists of generating the output image. Say for example the original image is a 640×480 image, its most likely that you will want your output image to also be 640×480. Therefore, for every pixel from [0,0] to [639,479], you will want to inverse transform the pixel co-ordinate and see whether a pixel at the inverse co-ordinate exists. if no pixel exists (i.e. trying to find the pixel [-10,-43]), you can decide what to do in the output. For my version of inverse mapping, I chose to set this output pixel to zero. Once this has been done across the whole output image range, the transformed image will be presented. This method naturally replicates pixels in blank spaces and is a form of nearest neighbor interpolation. The issue here however is that you can’t inverse map pixels that are arriving in a stream as you need to access pixels of the future and pixels that may have passed. If the transform is known to only occur over a small X/Y range, a buffering system can be developed (like this guy). To ease implementation, I decided to go down the “noob” approach of inverse mapping from memory back into memory. This will result in lower than expected frame rates but is really easy to program and is low on memory consumption. Its worth bearing in mind that UART frame transfers is still the limit!

Results

For my affine transformation, I decided upon a rotation of 10 degrees, a shear in the X direction of 20 degrees and a scale of 0.8 in both X and Y. I then implemented the transformation matrix as 6bit fixed point (more bits can be used if required!) ensuring all multiplications were on integers and divisions could be implemented as shifts. This was logic friendly and ended with my image transformation module consuming 282 logic cells. This did require a modification to my memory handler though to allow for an additional slave. The memory handler now services the pixel in FIFO (highest priority), the UART Handler (second highest priority due to low frequency of read requests) and the image transformation module (lowest priority due to high frequency of reads and writes).

Matlab result of the transform on a test image.

Matlab result of the transform on a test image.

Received transformation image with original transformation image

Voila, success! All I need to do now is wait for my dual camera PCB to arrive….

Soon to be a depth camera!

Soon to be a depth camera!

I’m not going to upload my code onto my Github until I’ve properly sorted it out – its a complete mess at the moment!

Hello Sir,

I’m working on video rotation with FPGA, with input video resolution from 10*10 pixel to 1920*1080pixel size and frame rate of 50 frame/s.

In my design, input image first store in DDR RAM and read pixel of source frame to generate destination frame.

For rotation i used inverse mapping and generate source pixel address.Because the lake of BRAM in FPGA for buffering I read image in Tile manner from DDR RAM and cache it inside FPGA BRAMs then find respective destination pixels of that tile.

this algorithm have a lot of overhead and i think there is a better way for rotation of video with FPGA using DDR RAM and without using External SRAM.

Would it possible for you to guide me and explain how do you overcome with memory shortage in FPGA for rotate

large image?