Image compression is a massive topic and to be honest, a bit of a buzzword title. Images can be compressed in multiple ways. One of the simplest being reducing the bit depth of the image. Reducing the bit depth is a pretty poor method of compression though and generally produces weird banding results as could be seen in my earlier post. People way more intelligent than myself then developed a variety of methods to try and compress images further. It turns out that humans have higher sensitivity to the brightness of a pixel as opposed to the colour due to the ratio of rods and cones in the eye. Because of this, you can transform an RGB image into a domain that separates the colour from the brightness. I mentioned this in my image segmentation post where I talked about transforming to the YCgCo domain and segmenting based on colour there.

The JPEG compression standard involves converting from RGB to YCbCr and compressing the blocks in this domain instead. Because the eye is much less sensitive to colour, a method known as subsampling is used. In a fully sampled pixel, each pixel has a Y component, a Cb component and a Cr component. In a subsampled pixel however, the Cb and Cr components are shared between pixels. This post has a really good pictoral example of how this is done.

While colour compression is one method of reducing image size, another is based on trying to maintain the quality of an image while reducing the size using psychological properties of human vision. This method assumes that the eye can still perceive an image reasonably well which has been somewhat blurred (i.e. sharp corners/edges/features of the image have been removed). By blurring the image and transforming it into another domain (a different one to the colour transform above!), the image can be represented with way fewer pieces of data than before. This method of compression is based on the DCT – a transform similar to the DFT that only operates on real data i.e. pixels. The DCT is a reversible transform meaning data that is transformed into the DCT domain can be transformed back perfectly assuming no loss of precision (I’m looking at you floating point!). One advantage of the DCT however is that it is reasonably insensitive to precision loss meaning data transformed into the DCT domain, bit crushed and transformed back will look “pretty much” the same dependent on how much bit crushing – and therefore data loss happened. This Matlab article also explains how this applies to images with an example at the bottom. If an 8×8 block of pixels is transformed into the DCT domain, the result will be an 8×8 block of real values. In most standard images, you end up with a lot of the data in the top left hand side of the DCT domain data. From this, you can essentially set all values below some threshold to zero, reducing the amount of data present. Executing the inverse DCT on this data will recover the original pixels though they will look “blurred” due to the data that was removed in the DCT domain. As it turns out, values of the DCT domain data furthest from the top left corner correspond to higher frequencies present in the original data. Removing these “higher frequency” components is equivalent to low pass filtering the original data, hence the blurriness.

Whew! That was quite a paragraph. The DCT is an even better transform as it can be implemented as a matrix multiplication. FPGAs are also very well suited to matrix multiplication due to their inherent parallel requirements. Performing a 2D DCT is literally equivalent to taking a DCT matrix, multiplying it and the input pixel block, then multiplying that result by the transpose of the DCT matrix. The inverse can then be executed with a similar operation.

DCT Compression implementation

I implemented some image compression using the DCT in my FPGA for the OV7670. I managed to achieve 20fps with a compression ratio of 10.67:1 for the low quality version and 4.74:1 for the higher quality version.

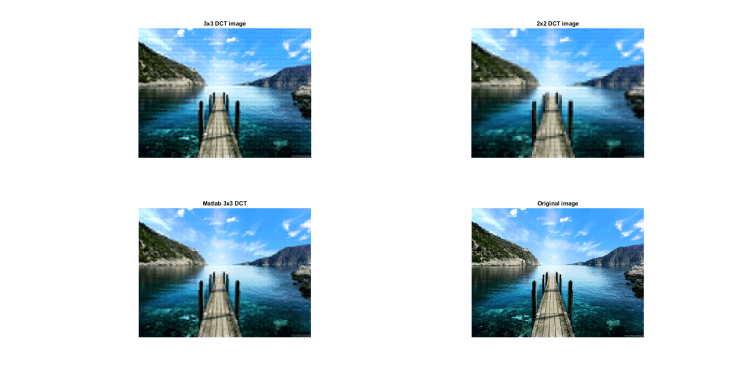

To make sure my code worked, I wrote a quick testbench that read raw pixel values from a file and streamed them into the DCT compression module. I then took the output from the DCT module and wrote these to another text file to then be imported into Matlab. In Matlab, I then regenerated the original blocks from the DCT coefficients along with executing the same operation in Matlab using higher precision variables to understand how low precision DCTs perform visually.

DCT compressed images from my model and Matlab.

DCT compressed images from my model and Matlab.

The compression module takes an 8×8 block of pixels and calculated the first 3×3 DCT values, assuming the rest would be set to zero. It is here that the compression occurs. While I could have written a DCT module capable of generating a full 8×8 DCT of the 8×8 block of pixels, I instead only calculate the first 3×3 values as the rest of the values would likely have been below zero. The DCT is calculated using 6bit fixed point precision DCT coefficients which is less than I would’ve liked but also keeps the logic usage down. This low precision of coefficients can be seen as artifacts in the 3×3 output from my module as opposed to the 3×3 output from Matlab. For reference, the module expects pixel colour inputs to be 5bit too. For an RGB565 stream, this isn’t an issue as both R and B will already be 5bit. For the G channel, a bit of data is lost, making the input pixel equivalent to RGB555. This data loss could’ve been executed by the OV7670 but losing a single bit doesn’t take any additional logic.

As can be seen, this is some pretty heavy compression as the output images compared to the original are pretty blurred (especially the 2×2 DCT image). It kinda reminds me of watching a 144p youtube video!

Obviously the next step after Matlab verification was to implement the actual module in my FPGA and get some compressed images out! This was quite an intensive step as I needed to add other components. The main addition was writing a module that could input an extra 8*640 pixels to ensure all DCT blocks get written to the output. I also needed to limit the maximum frame rate that the camera operated at to 20fps. With a pixel clock of 16MHz, pixels are emitted from the OV capture module at 8MHz. Each DCT block is generated after 8 pixels have been pushed through 8 line buffers. This means that for every row, a DCT block needs to be generated every 8 pixels. With a pixel clock of 8MHz, this gives the DCT module 1us to generate the 3×3 DCT values. From simulation (and state counting), it turned out that it takes 59 cycles from input of an 8×8 pixel block to output of a 3×3 DCT block. This means that the module needs to run at 59/1us = 59MHz to keep up with the input pixel rate. Knowing this, I chose to run the DCT module at 60MHz – along with changing the entire capture clock domain to this frequency too. This included changing the pixel FIFO to a dual clock version with the write clock at this new frequency.

Synthesis

Synthesis takes much longer now. I need 3x of these DCT modules, one for each colour channel. This also means another block is required to take the DCT module outputs and write them to the system SDRAM. The below results are for the entire system.

Total logic elements: 4459/6272 (71%)

Total registers: 3501

Total memory bits: 111560/276480 (40% – this includes the pixel FIFO)

Embedded Multiplier 9bit elements – 24/30 (80%)

Pretty resource intensive!

The camera capture domain clock on a slow 85deg model is capable of running at 62.35MHz, higher than my 60MHz target.

Results

After a bit of modification to my normal C# program (including implementing the IDCT!), I was able to get some DCT compressed images out.

DCT Compressed images. Top left is uncompressed, top right is compressed with a 2×2 block size, middle right is a 3×3 block size for green with 2×2 block sizes for red and blue (poor poor chroma subsampling!) and bottom is a 3×3 block size.

I used an object with text on for this test as this allowed me to clarity of the test post compression. While the quality isn’t exceptional, I am able to massively reduce the image size which increases speed of transfers through UART. I’m now limited by the slowness of C# as opposed to UART limited. Lets throw a bit of math down:

UART at 6MBaud = 6M/10 = 600000byte/s

Standard 640×480 16bpp frame size: 640*480*16/8 = 614400 bytes

640×480, 16bpp frame rate: 600000/614400 = 0.98fps

Standard 320×240 16bpp frame size: 320*240*16/8 = 153600 bytes

640×480, 16bpp frame rate: 600000/153600= 3.91fps

Compressed 640×480 for 2×2 blocks: 640*2/8*480*2/8*3 = 57600 bytes

Compressed 640×480, 2×2 frame rate: 600000/57600 = 10.42fps

Compressed 640×480 for 3×3 blocks: 640*3/8*480*3/8*3 = 129600 bytes

Compressed 640×480, 3×3 frame rate: 600000/129600 = 4.63fps

As can be seen above, both compressed images can achieve a frame rate higher than the 320×240 16bpp image proving the effectiveness of DCT compression! Obviously if you mix DCT compression and chroma subsampling (in the YCbCr domain), you can achieve much better compression ratios. Throw in some form of bit stream compression (huffman coding/RLE) and you’re pretty close to how JPEGs are made. It is worth noting that a 320×240 pixel image with 2×2 block size gives a frame size of 14400 bytes. This kind of frame could be wirelessly transmitted using a standard nRF24L01 achieving 17fps! Do I hear a wireless camera? Further expanding on this, the maximum UART rate for the ESP8266 has been tested at 20MBaud. While this allows data transfers into the ESP8266 to be fast, the ESP8266 to the actual internet is much slower though this poster states managing to achieve 8Mbit/s. With this data packet, this could achieve optimal condition frame rates of 69fps! A full 640×480 3×3 block size frame could be sent at 7.7fps. These numbers are only under ideal conditions but are still pretty cool!

Image processing

I probably should’ve done a separate post for each but hey! Relating to the previous paragraph where I spoke about changing colour domains (RGB -> YCbCr/YCgCo), colour based image processing can be done much easier in these transformed domains. By changing the ratio of Y to the chrominance channels, colours can be more or less saturated. The images from this camera seem pretty washed out so I had a go to see what happened when I saturated the Cg and Co channels. All saturation was done in software on the C# side though doing this in the FPGA wouldn’t be hard.

Original photo with saturation of 2x on orange and green channels separately

Saturation of 4x on orange and green channels separately

Saturation of 2x and 4x on both channels simultaneously

Saturation of 0.5x on orange and green channels separately

Saturation of 0.5x and 0.25x on both orange and green channels

Saturation of 16x on both channels, check that noise out!

Image subsampling

For the below images, the chroma channels were subsampled by varying amounts

Original picture with 4x subsampling on both Cg and Co

Original picture with 8x subsampling on both Cg and Co, note the artifacts on the left

Original picture with 16x subsampling on both Cg and Co, Even more artifacts are visible!

Original picture with 8x subsampling on both Cg and 16x subsampling on Co, Less artifacts are visible here as they eye is less sensitive to Co than it is to Cg.

The above sub sampling methods could be integrated to further reduce image size and increase compression ratios!

Well that was certainly a long post! I really should’ve been revising but I had to take my car into the garage and have been waiting for a phone call. Code will hopefully be on Github when I’ve cleared it all up!

I have the same issue with the green colour: there’ s something wrong about it, and I can’ t really figure out what it is. My red and blue channels work, so why green looks clipped?

Thank you!